SEO Agency Melbourne

Search Engine Optimisation (SEO) is the process of improving your rankings in search engines like Google, Bing and DuckDuckGo. SEO rests on three key requirements: meeting technical SEO requirements so your site is easily crawlable, avoiding spam and other spammy tactics, and following best practices.

We have helped many Australian companies grow organically in Google and other search engines through SEO. In one case, SEO helped a company add $2.8 million in revenue in a year, up from its previous revenue of $420K per year.

Many websites suffer from poor technical SEO, slow page speed, crawl errors and other issues reported in Google Search Console (GSC). These problems degrade the user experience and, in turn, hurt your keyword rankings.

4.7 out of 5 from 200+ reviews

Search Engine Optimisation

Search Engine Optimisation (SEO) is the process of improving site rankings in search engines like Google, Bing and DuckDuckGo. A winning SEO strategy requires crafting unique, valuable content; improving the site’s crawlability so Google can read and understand it; and improving the user experience so visitors can easily consume the content. It also requires running A/B tests and experiments to improve overall website performance.

To improve your site, you will need to:

- Conduct in-depth technical SEO audits to identify crawl issues

- Optimise your website’s crawlability to improve indexing and rankings

- Implement schema and structured data

- Improve your site’s speed, security and usability

- Address technical issues like crawl errors and duplicate content

- Improve the structure of each page and document

What you need is a framework that focuses on organic rankings without taking shortcuts: a digital marketing roadmap built for long-term growth, and a plan that iteratively improves your website so that Google and other search engines can crawl and understand it better.

Whether you’re a startup or an established enterprise, implementing effective SEO strategies can increase your organic traffic and boost inquiries and sales.

You are in good company

Our SEO services include everything your site needs to rank.

Don’t worry about paying a separate web developer to implement SEO changes such as structured data or hierarchical template updates. Our SEO audit will uncover everything, and our SEO services will implement the roadmap the audit outlines.

- Page speed improvements

- Mobile responsiveness

- Google Tag Manager setup

- Google Analytics setup

- Keyword research

- Keyword mapping

- Website caching setup

- On-Page SEO

- XML sitemaps

- robots.txt

- Page metadata

- Meta tags validation

- Document outlines

- Alt text improvements

- Structured data

- Heading hierarchy

- Template improvements

- Content improvements

- Content calendar

- Competitor analysis

- Organic link campaigns

- In-depth reporting

- Local citations

- NAP verification & fixes

- Link building campaign

- E-E-A-T optimisation

- SERP feature schema optimisation

- Rank tracking & reporting

Top SEO services in Australia

Our SEO services focus on keyword research, competitive analysis, site architecture, conversion optimisation through UX/UI improvements, and overall revenue growth.

Over the last 15 years, Mojo Dojo has worked across more than 81 verticals, in 18 languages and 45 countries, helping some of the world’s most sophisticated companies run laser-targeted SEO campaigns designed for maximum revenue impact.

We don’t believe in chasing awards. The best craftspeople never seek external validation for their craft.

Keyword Research

We pick the most relevant keywords for your business based on several considerations, including competitive analysis, search intent, keyword type, keyword themes and highly profitable keyword opportunities. On average, we spend over 8 hours analysing your keywords and your competitors’ keywords to understand your customers’ behaviour: how they search and what they search for.

Technical SEO

Our team has driven SEO campaigns for Fortune 500 companies. Our technical SEO centres on Google Analytics audits, SEO audits, schema and structured data setup, site architecture, management of 301 redirects and 404 pages, and other server-related optimisation. The most important part is uncovering what Google is actually reading and understanding on your site.

On-page SEO

Our on-page SEO refines your pages and sharpens the relevance of each page’s topic. We do this by improving title tags, meta descriptions, headings, document outline, copy, URLs, user experience, media and other on-page elements.

We also focus heavily on resolving keyword cannibalisation, featured snippet optimisation and content audits, including content pruning. We will also recommend a full-cycle content calendar, including guidance on E-E-A-T optimisation.

Off-page SEO

Off-page SEO involves initiatives that enhance a website’s organic search visibility and credibility without modifying the website’s content or structure. This includes cultivating high-quality backlinks through a targeted link building program.

We focus on:

- Link building

- Backlink audit

- App store optimisation

- Google Business Profile (formerly Google My Business) optimisation

- Local citation improvements

- Local citation building

- Organic link campaigns

SEO consulting

Mojo Dojo has been involved in a number of consulting projects with larger organisations that have built in-house online marketing teams.

We provide consulting on a number of core SEO areas:

- On-Page SEO consulting

- Speed improvements

- Page speed & Lighthouse consulting

- SEO strategic review

- SEO program development

- Link building consulting and implementation

- SEO training

Our consulting services are useful for businesses that:

- have clear goals and objectives for organic traffic growth

- are scaling their business

- have limited in-house expertise

- want to augment their expertise

- are revamping, replatforming or migrating their website

- are implementing a new content strategy

We have written extensive SEO audit reports for organisations with over 5 million pages and over 10 million visitors per month.

Whether you need specific SEO advice, SEO specialists or an extensive topical map, we can help.

Search Engine Optimisation Results

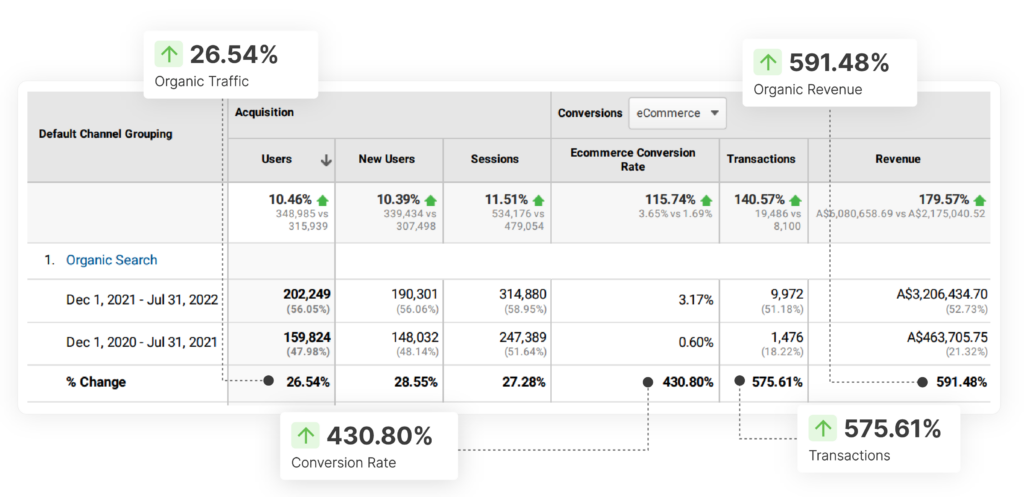

Contextual SEO for a retail company

Contextual SEO raised a company’s revenue by $2.8 million in 7 months, with a 26% increase in high-intent keyword rankings.

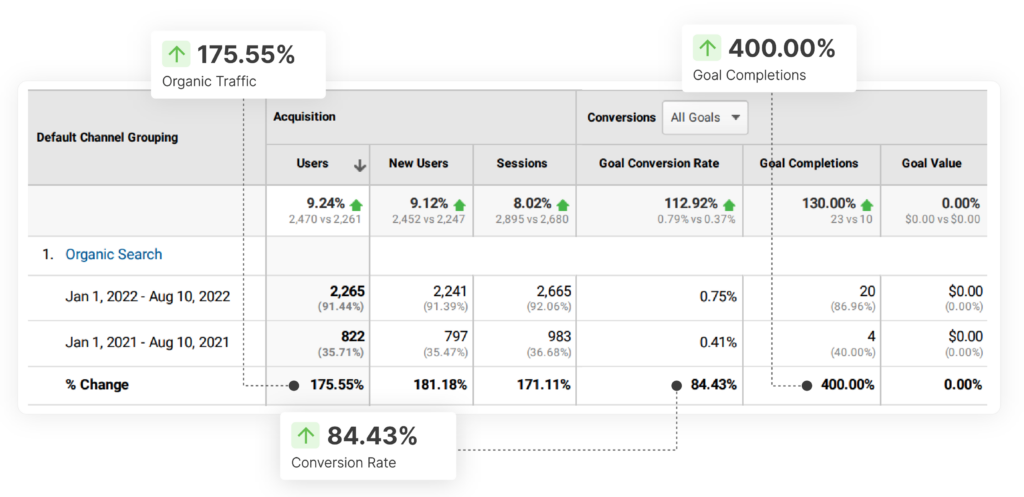

SEO for a construction company

If you target high-intent keywords, even lower traffic volume can substantially increase your revenue.

High-intent keywords take hours of research to uncover. SEO services built on a cookie-cutter approach rarely allocate time for in-depth keyword research.

A very prominent construction company nearly doubled its organic traffic.

It also increased its number of inquiries fourfold.

The company sells houses priced at around $3–4 million.

These results came from a single campaign covering just part of their website.

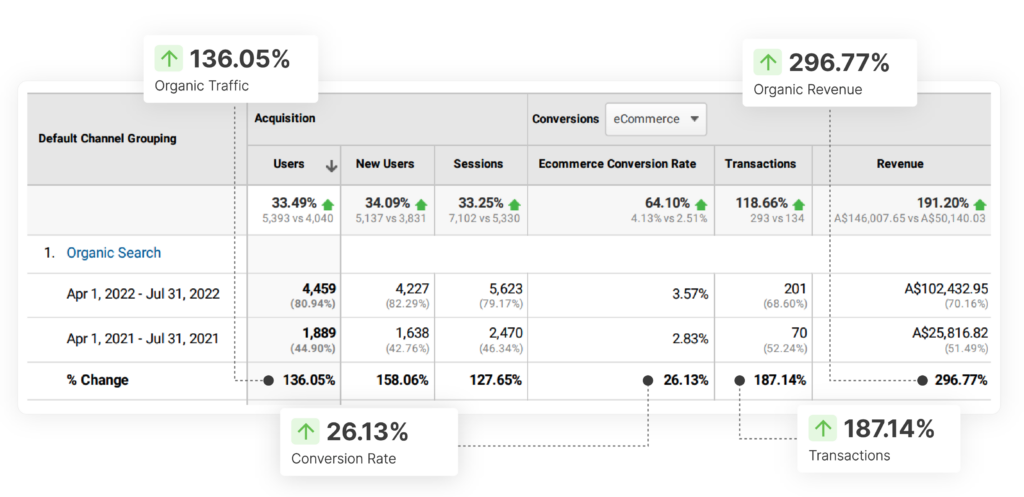

SEO for a supplies company

Another company, a supplier, more than tripled its revenue and increased its organic traffic by 136%.

Its eCommerce store had never done more than $26K per month.

Our SEO campaign took that to a cool $102K per month.

SEO is an incredibly powerful channel that can benefit all types of businesses, regardless of niche or industry.